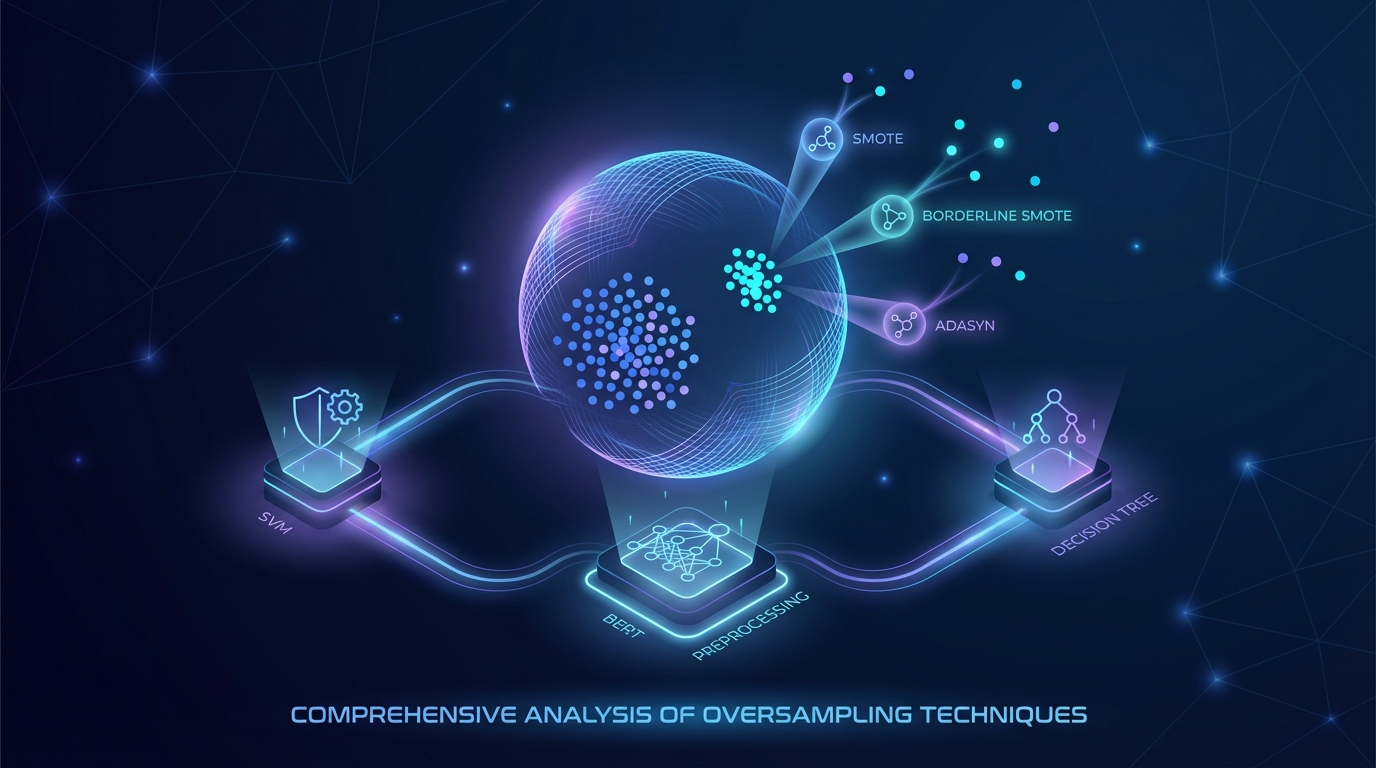

Comprehensive Analysis of Oversampling Techniques for Addressing Class Imbalance Employing Machine Learning Models

Image generated by Gemini AI

A study evaluates three oversampling techniques (SMOTE, Borderline SMOTE, and ADASYN) for addressing class imbalance in machine learning. Using BERT for preprocessing, it compares models including SVM, Decision Tree, and Logistic Regression. Notably, SVM with Borderline SMOTE achieved 71.9% accuracy and a Matthews Correlation Coefficient (MCC) of 0.53, which the study presents as evidence that oversampling improves model performance.

Analysis of Oversampling Techniques Reveals Insights for Class Imbalance in Machine Learning

Recent research examined how well oversampling techniques address class imbalance in machine learning datasets and reported gains in model performance. The study focused on SMOTE (Synthetic Minority Oversampling Technique), Borderline SMOTE, and ADASYN (Adaptive Synthetic Sampling), each tested with several machine learning algorithms, including Support Vector Machine (SVM), Decision Tree, and Logistic Regression.
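For readers unfamiliar with these methods, the core idea of SMOTE can be sketched in a few lines: pick a minority sample, pick one of its nearest minority neighbours, and create a new point somewhere on the line segment between them. The sketch below is an illustration only, not the study's implementation; the function name `smote_sketch`, its parameters, and the toy data are assumptions made for this example. Borderline SMOTE and ADASYN use the same interpolation step but differ in which minority samples they choose as starting points (those near the class boundary, or those surrounded by more majority samples, respectively).

```python
import math
import random


def smote_sketch(minority, n_new, k=5, seed=0):
    """Create n_new synthetic points by interpolating between minority samples.

    Hypothetical helper for illustration; real projects would normally use a
    maintained library rather than this simplified version.
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        # Choose a random minority sample as the starting point.
        i = rng.randrange(len(minority))
        base = minority[i]
        # Find its k nearest minority neighbours (excluding itself).
        others = [p for j, p in enumerate(minority) if j != i]
        others.sort(key=lambda p: math.dist(base, p))
        neighbour = rng.choice(others[:k])
        # Place the new point at a random position on the connecting segment.
        gap = rng.random()
        synthetic.append(tuple(b + gap * (n - b) for b, n in zip(base, neighbour)))
    return synthetic


# Toy 2-D minority class (made-up values, not data from the study).
minority = [(1.0, 1.0), (1.2, 0.9), (0.8, 1.1), (1.1, 1.3)]
new_points = smote_sketch(minority, n_new=6, k=3)
print(new_points)
```

Because every synthetic point is a convex combination of two existing minority samples, the new points always fall inside the region already spanned by the minority class.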

Key Findings

The most notable result came from the SVM model with Borderline SMOTE, which achieved an accuracy of 71.9% and a Matthews Correlation Coefficient (MCC) of 0.53. Because MCC uses all four cells of the confusion matrix, it remains informative when classes are imbalanced. On its scale from -1 to 1, where 0 corresponds to chance-level prediction, a value of 0.53 suggests the model classifies both majority and minority instances meaningfully better than chance.
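To show why MCC is reported alongside accuracy, the following sketch computes both from confusion-matrix counts. The counts are hypothetical and chosen only to illustrate the contrast; they are not taken from the study.

```python
import math


def accuracy(tp, tn, fp, fn):
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + tn + fp + fn)


def mcc(tp, tn, fp, fn):
    """Matthews Correlation Coefficient:
    (TP*TN - FP*FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN))
    """
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # By convention, MCC is taken as 0 when a row or column of the matrix is empty.
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom


# Hypothetical test set: 10 positive (minority) and 90 negative (majority) cases.
always_negative = dict(tp=0, tn=90, fp=0, fn=10)   # ignores the minority class
balanced_errors = dict(tp=8, tn=72, fp=18, fn=2)   # finds most minority cases

for name, counts in [("always_negative", always_negative),
                     ("balanced_errors", balanced_errors)]:
    print(name, round(accuracy(**counts), 2), round(mcc(**counts), 2))
```

In this made-up example, the classifier that never predicts the minority class scores higher on accuracy (0.90 versus 0.80) but has an MCC of 0, while the classifier that actually detects minority cases has an MCC of about 0.41.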


📰 Original Source: https://doi.org/10.2174/0126662558347788241127051934

All rights and credit belong to the original publisher.