Diffusion-Pretrained Dense and Contextual Embeddings

Image generated by Gemini AI

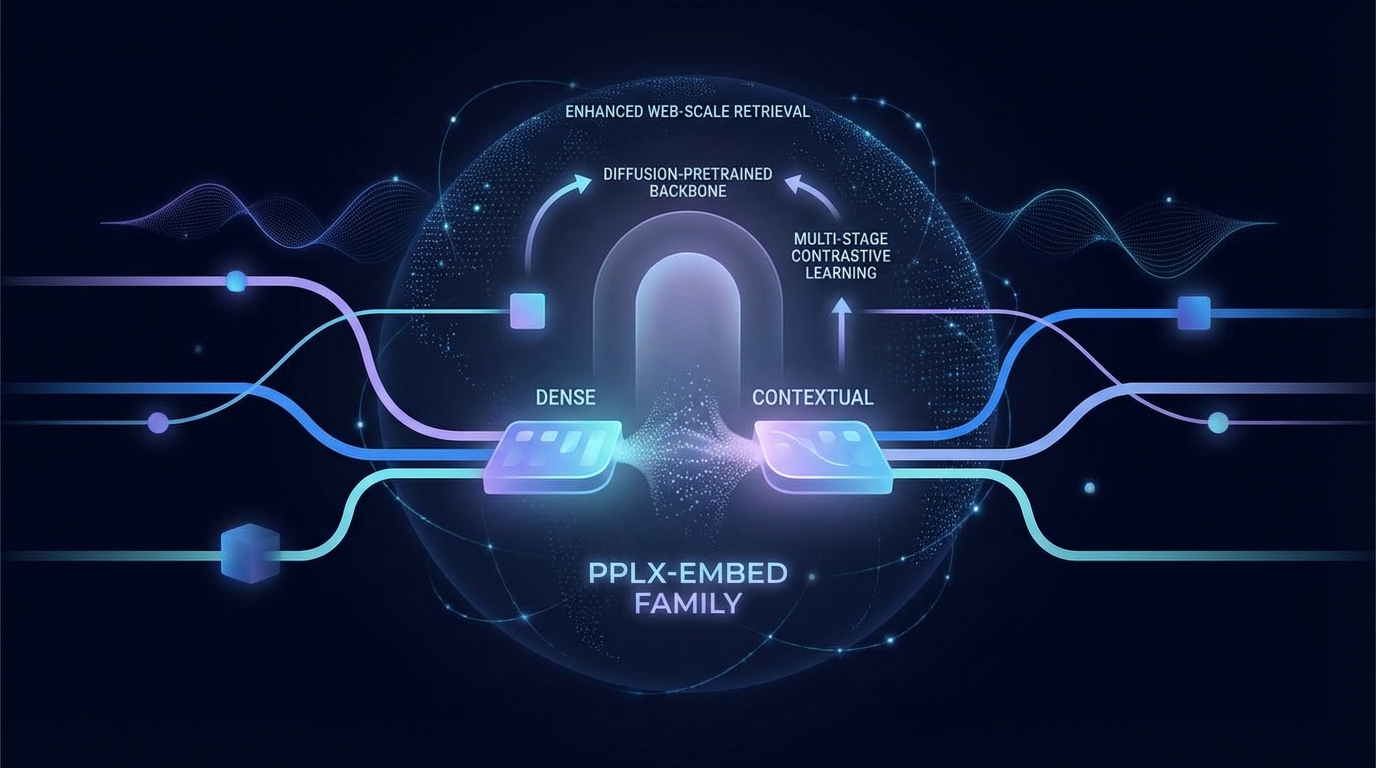

The pplx-embed family of multilingual embedding models applies multi-stage contrastive learning to a diffusion-pretrained language model backbone for web-scale retrieval. Two variants are released: pplx-embed-v1 for standard retrieval tasks and pplx-embed-context-v1 for contextualized embeddings. The latter sets new records on the ConTEB benchmark, while pplx-embed-v1 is competitive on several public retrieval benchmarks and performs strongly in internal evaluations, which points to its suitability for large-scale search applications.

New Multilingual Embedding Models Set to Transform Web-Scale Retrieval

Researchers have released pplx-embed, a family of multilingual embedding models designed for web-scale retrieval. The models are trained with multi-stage contrastive learning on top of a diffusion-pretrained language model, with the aim of capturing context across long passages.
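To make the idea of contrastive learning concrete, the sketch below computes an InfoNCE-style loss with in-batch negatives, a common objective for training embedding models. It is an illustration only: the article does not describe the exact loss, the training stages, or the hyperparameters used for pplx-embed, so the function names and the `temperature` value here are assumptions.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length (returned unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def info_nce_loss(queries, documents, temperature=0.05):
    """Mean InfoNCE loss over a batch.

    documents[i] is treated as the positive for queries[i]; every other
    document in the batch acts as a negative. The temperature is an
    illustrative assumption, not a value taken from the paper.
    """
    q = [l2_normalize(v) for v in queries]
    d = [l2_normalize(v) for v in documents]
    total = 0.0
    for i, qi in enumerate(q):
        logits = [dot(qi, dj) / temperature for dj in d]
        # Numerically stable log-sum-exp over all candidates.
        m = max(logits)
        log_denominator = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_denominator - logits[i]
    return total / len(q)
```

The loss is close to zero when each query is most similar to its own document and grows when a negative scores higher than the positive, which is the signal that pulls matching pairs together during training.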

The pplx-embed models use bidirectional attention, so each token's representation can draw on the text both before and after it. Two variants have been released: pplx-embed-v1, optimized for standard retrieval tasks, and pplx-embed-context-v1, which produces contextualized embeddings that fold broader document context into each individual passage representation.
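One common way to build passage embeddings that carry document-level context is to encode the whole document first and only then pool token vectors per passage, an approach often called late chunking. The sketch below shows that pooling step under stated assumptions: the article does not say how pplx-embed-context-v1 injects context, the token vectors are assumed to come from some encoder run over the full document, and `late_chunk_embeddings` is a hypothetical helper name.

```python
def mean_pool(token_vectors):
    """Average a non-empty list of equal-length token vectors."""
    dim = len(token_vectors[0])
    count = len(token_vectors)
    return [sum(vec[k] for vec in token_vectors) / count for k in range(dim)]


def late_chunk_embeddings(token_vectors, chunk_spans):
    """Pool one embedding per passage from document-level token vectors.

    token_vectors: per-token vectors produced by encoding the WHOLE
        document at once (assumed input; no model is called here).
    chunk_spans: (start, end) token index pairs, end exclusive.
    """
    return [mean_pool(token_vectors[start:end]) for start, end in chunk_spans]


# Toy example: four tokens, two passages of two tokens each.
tokens = [[1.0, 0.0], [3.0, 0.0], [0.0, 2.0], [0.0, 4.0]]
passages = late_chunk_embeddings(tokens, [(0, 2), (2, 4)])
```

Because the token vectors were computed with the full document in view, each pooled passage vector reflects its surroundings, in contrast to encoding every passage in isolation.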

Performance Highlights

The pplx-embed-v1 model has demonstrated competitive performance across several prominent benchmarks, including:

- MTEB (Multilingual, v2)
- MTEB (Code)
- MIRACL
- BERGEN
- ToolRet

The pplx-embed-context-v1 model sets new records on the ConTEB benchmark, which evaluates how well passage embeddings make use of context from the surrounding document.

Real-World Applications

Beyond public benchmarks, pplx-embed-v1 has also performed strongly in internal evaluations that simulate real-world search over tens of millions of documents. This suggests it can improve retrieval quality and efficiency in production settings.
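For readers unfamiliar with how dense retrieval uses embeddings at query time, here is a minimal sketch of ranking documents by cosine similarity. It is a brute-force illustration over a toy in-memory corpus with made-up vectors; it does not reproduce the internal evaluation described above, and a corpus of tens of millions of documents would normally be served from an approximate nearest-neighbor index rather than a linear scan.

```python
import heapq
import math


def cosine(a, b):
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)


def top_k(query_vec, corpus, k=3):
    """Return the ids of the k documents most similar to the query.

    corpus maps a document id to its embedding vector (toy data here).
    """
    scored = ((cosine(query_vec, vec), doc_id) for doc_id, vec in corpus.items())
    return [doc_id for _, doc_id in heapq.nlargest(k, scored)]


# Hypothetical two-dimensional embeddings, for illustration only.
corpus = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
ranked = top_k([1.0, 0.1], corpus, k=2)
```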

📰 Original Source: https://arxiv.org/abs/2602.11151v1

All rights and credit belong to the original publisher.