Jet-RL: Enabling On-Policy FP8 Reinforcement Learning with Unified Training and Rollout Precision Flow

Image generated by Gemini AI

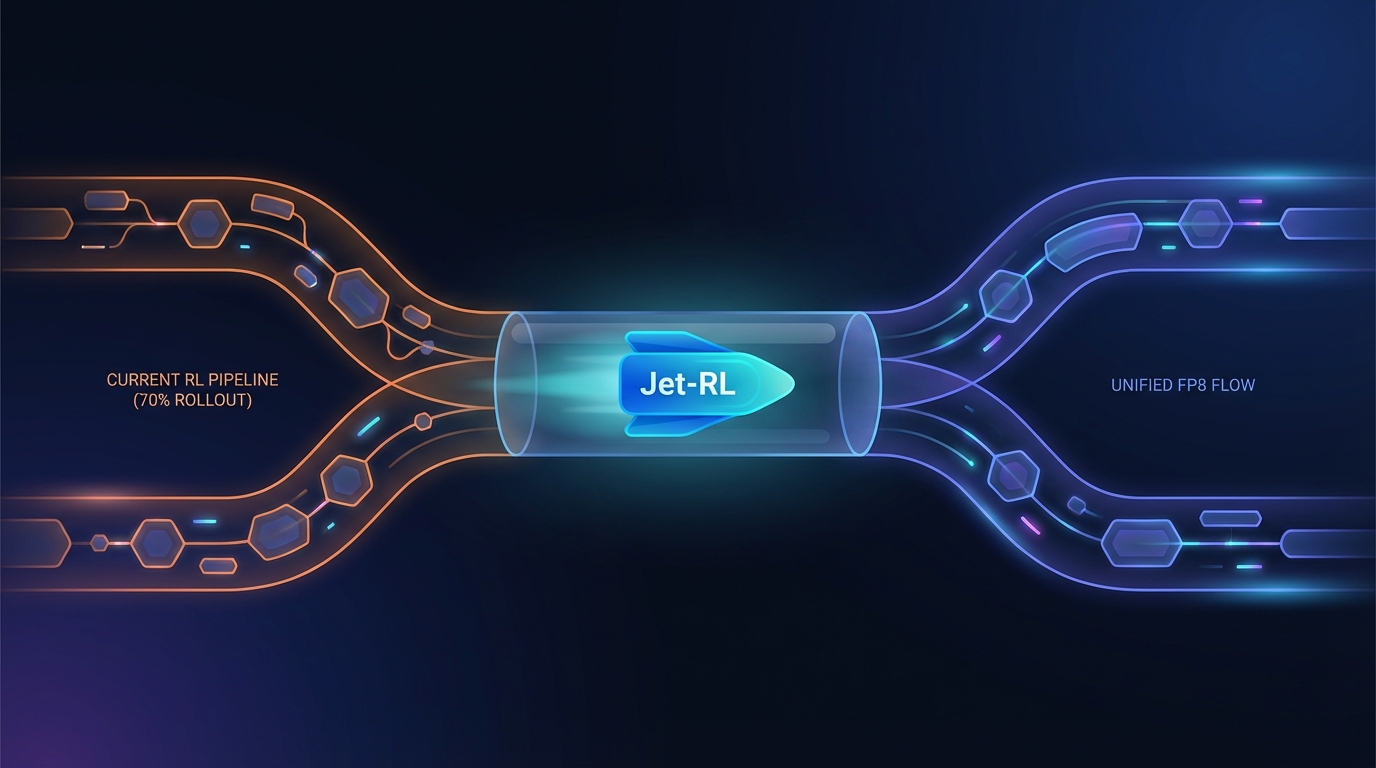

Current reinforcement learning (RL) pipelines for large language models are inefficient: the rollout phase consumes over 70% of training time. The study finds that the common strategy of training in BF16 while running rollouts in FP8 leads to instability and accuracy loss. Jet-RL instead uses unified FP8 precision for both training and rollout, which speeds up the training phase by up to 41% and stabilizes convergence with minimal accuracy impact.

Jet-RL Revolutionizes Reinforcement Learning with Unified FP8 Training

Jet-RL is a framework that runs both the training and rollout phases of reinforcement learning (RL) in FP8 precision. This improves computational efficiency and reduces the high resource consumption of traditional RL pipelines.

Current approaches that train in BF16 and roll out in FP8 often suffer from instability and accuracy collapse during long-horizon rollouts. By using the same FP8 precision in both phases, Jet-RL minimizes numerical discrepancies between training and inference and eliminates the need for inter-step calibration.
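To make the idea of a numerical discrepancy concrete, here is a minimal sketch in plain Python. It is not Jet-RL's implementation. It assumes a simplified FP8 E4M3 rounding (one shared scale per call, no subnormals), uses Python floats as a stand-in for the higher-precision training path, and applies it to a toy output layer whose sizes and names (`fake_quant_e4m3`, `hidden`, `vocab`) are hypothetical.

```python
import math
import random

E4M3_MAX = 448.0  # largest finite value in the FP8 E4M3 format


def fake_quant_e4m3(values):
    """Round values to a simplified FP8 E4M3 grid using one shared scale.

    Assumption: subnormals and special values are ignored for brevity.
    """
    amax = max(abs(v) for v in values)
    if amax == 0.0:
        return list(values)
    scale = amax / E4M3_MAX  # map the largest magnitude onto E4M3_MAX
    out = []
    for v in values:
        m, e = math.frexp(v / scale)  # v / scale == m * 2**e with 0.5 <= |m| < 1
        m = round(m * 16) / 16        # keep 4 significant bits (1 implicit + 3 stored)
        q = max(-E4M3_MAX, min(E4M3_MAX, math.ldexp(m, e)))
        out.append(q * scale)
    return out


def softmax(logits):
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [x / total for x in exps]


def kl_divergence(p, q):
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


rng = random.Random(0)
hidden, vocab = 64, 16  # toy sizes, chosen only for illustration
h = [rng.gauss(0.0, 1.0) for _ in range(hidden)]
W = [[rng.gauss(0.0, 0.2) for _ in range(hidden)] for _ in range(vocab)]

# "Training" logits in high precision vs. "rollout" logits from FP8-rounded operands
logits_ref = [sum(w * x for w, x in zip(row, h)) for row in W]
h_q = fake_quant_e4m3(h)
W_q = [fake_quant_e4m3(row) for row in W]  # one scale per output row
logits_fp8 = [sum(w * x for w, x in zip(row, h_q)) for row in W_q]

kl = kl_divergence(softmax(logits_ref), softmax(logits_fp8))
print(f"KL(train || rollout) = {kl:.6f}")
```

Even in this toy setting the two next-token distributions differ, so tokens sampled by the lower-precision path are not drawn from exactly the distribution the higher-precision path would assign.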

Performance Metrics of Jet-RL

Extensive experiments confirm Jet-RL's effectiveness, showcasing:

- Rollout Speedup: Up to 33% faster rollout times.

- Training Speedup: Up to 41% faster training phases.

- End-to-End Speedup: 16% overall acceleration compared to traditional BF16 training.

These improvements occur while maintaining stable convergence across various tasks, with negligible accuracy degradation, marking a crucial advancement for developers working with large language models (LLMs).
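As a rough guide to how per-phase speedups combine into an end-to-end figure, the sketch below weights each phase by its share of step time. It assumes a step consists only of rollout and training, with no other overhead such as weight synchronization. The function name and the example numbers are hypothetical. The "up to" figures above are peak values that need not come from the same configuration, so plugging them in will not necessarily reproduce the reported end-to-end number.

```python
def end_to_end_speedup(rollout_share, rollout_speedup, train_speedup):
    """Combine per-phase speedup factors into an overall speedup factor.

    rollout_share: fraction of baseline step time spent in rollout (0..1).
    rollout_speedup, train_speedup: factors such as 1.2 for "1.2x faster".
    Assumption: rollout and training are the only costs in a step.
    """
    if not 0.0 <= rollout_share <= 1.0:
        raise ValueError("rollout_share must be between 0 and 1")
    if rollout_speedup <= 0 or train_speedup <= 0:
        raise ValueError("speedup factors must be positive")
    new_time = rollout_share / rollout_speedup + (1.0 - rollout_share) / train_speedup
    return 1.0 / new_time


# Hypothetical inputs: rollout is 70% of the step, 1.2x and 1.1x phase speedups
print(f"{end_to_end_speedup(0.7, 1.2, 1.1):.3f}x")
```

Because rollout dominates step time, the overall factor is pulled toward the rollout speedup rather than the training speedup.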

Addressing Training Instability

The research attributes the instability of the BF16 + FP8 strategy to its off-policy nature: rollouts are sampled in FP8 while the model is updated in BF16, so the policy that generates the data differs numerically from the policy being trained. This mismatch hinders RL on complex reasoning tasks. With Jet-RL's unified approach, practitioners can expect a more reliable training process and better LLM performance in real-world applications.
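One way to see why a small precision mismatch matters more on long rollouts is to look at importance ratios between the training policy and the rollout policy. The sketch below is illustrative only and is not taken from the paper. It assumes a hypothetical constant gap of 0.01 nats per token between the two policies' log-probabilities, which is deliberately pessimistic because real errors rarely all point the same way.

```python
import math


def importance_ratios(logp_train, logp_rollout):
    """Per-token ratio pi_train(token) / pi_rollout(token); 1.0 means on-policy."""
    return [math.exp(a - b) for a, b in zip(logp_train, logp_rollout)]


def sequence_log_ratio(logp_train, logp_rollout):
    """Log of the whole-sequence ratio: the sum of per-token log-prob gaps."""
    return sum(a - b for a, b in zip(logp_train, logp_rollout))


T = 1000                     # hypothetical rollout length in tokens
logp_rollout = [-2.0] * T    # log-probs under the rollout-precision policy
logp_train = [-2.01] * T     # training-precision policy, off by 0.01 nats per token

per_token = importance_ratios(logp_train, logp_rollout)
seq_log = sequence_log_ratio(logp_train, logp_rollout)
print(f"per-token ratio = {per_token[0]:.4f}")
print(f"sequence log-ratio = {seq_log:.2f}")
```

Each token looks almost on-policy, yet the gap accumulates linearly in the log domain over the sequence. When both phases share the same numerics, the per-token gap is zero and the ratio stays at 1 regardless of length.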

📰 Original Source: https://arxiv.org/abs/2601.14243v1

All rights and credit belong to the original publisher.