Multi-RADS Synthetic Radiology Report Dataset and Head-to-Head Benchmarking of 41 Open-Weight and Proprietary Language Models

Image generated by Gemini AI

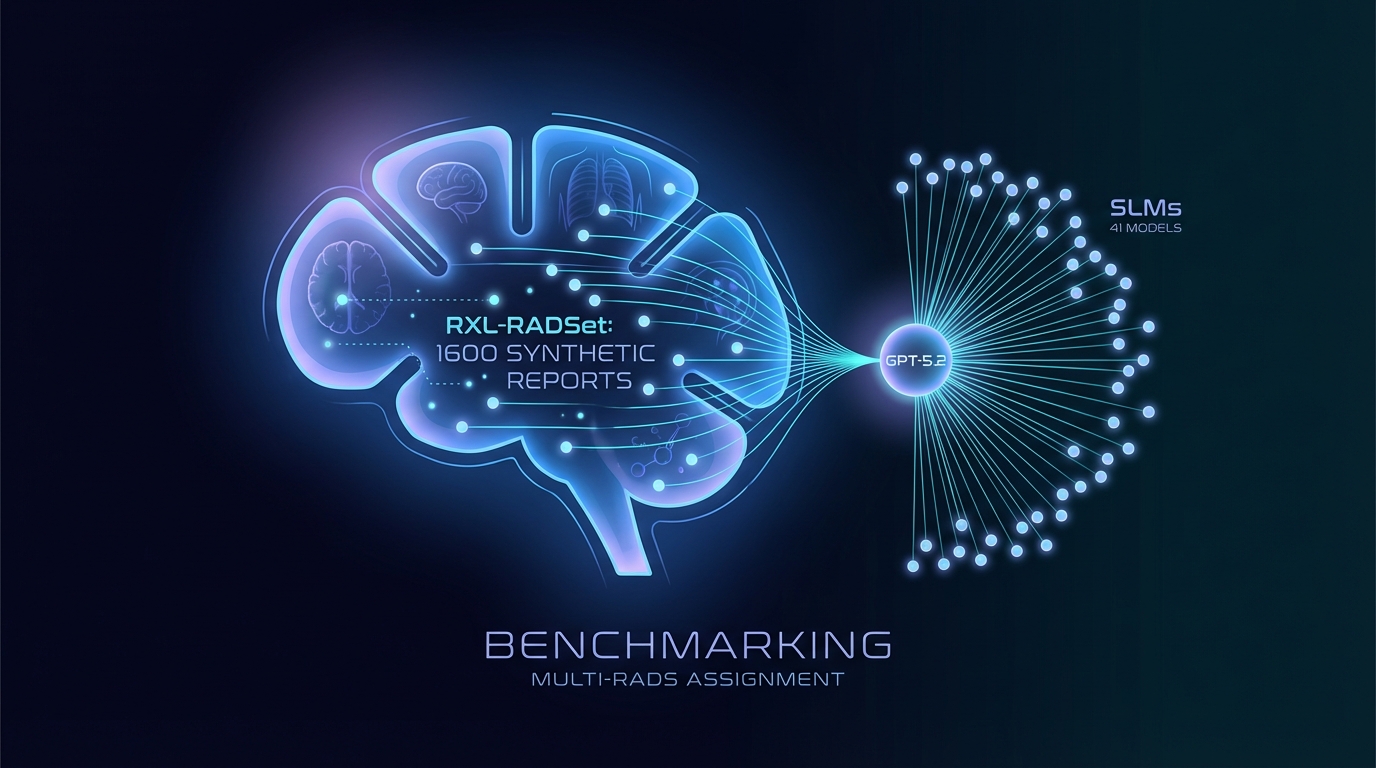

Researchers have developed RXL-RADSet, a benchmark of 1,600 synthetic, radiologist-verified radiology reports for evaluating automated RADS assignment. The study compares 41 small language models (SLMs) with GPT-5.2 on validity and accuracy. GPT-5.2 reached 99.8% validity and 81.1% accuracy, ahead of the pooled SLMs at 96.8% validity and 61.1% accuracy. Performance improved with model size and with guided prompting, but complex RADS frameworks remain challenging.

Multi-RADS Benchmarking Dataset Released for Language Models in Radiology

A new dataset aimed at improving radiology risk communication has been released: a benchmark of synthetic radiology reports spanning multiple Reporting and Data Systems (RADS). Known as RXL-RADSet, it contains 1,600 synthetic reports verified by radiologists and is designed to assess how well language models perform automated RADS assignment.

Performance Evaluation of Language Models

The study evaluated 41 quantized small language models (SLMs), ranging from 0.135 billion to 32 billion parameters, alongside the proprietary model GPT-5.2. The primary metrics were the validity and accuracy of the assigned RADS categories.
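The two metrics can be illustrated with a short sketch. This is a minimal Python example under stated assumptions, not the study's evaluation code: the label set `ALLOWED_LABELS` and the function `score_predictions` are hypothetical, validity is taken to mean that a model's output is one of the allowed categories, and accuracy is computed over all predictions, so an invalid output counts as incorrect. The article does not specify these details.

```python
from typing import Sequence, Set, Tuple

# Hypothetical label set for illustration only; each RADS framework
# defines its own categories.
ALLOWED_LABELS: Set[str] = {"1", "2", "3", "4", "5"}


def score_predictions(
    predictions: Sequence[str],
    references: Sequence[str],
    allowed: Set[str],
) -> Tuple[float, float]:
    """Return (validity, accuracy) as fractions of all predictions.

    Assumption: validity = share of outputs that are an allowed label;
    accuracy = share of outputs that exactly match the reference label.
    """
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have equal length")
    if not references:
        raise ValueError("at least one prediction is required")

    total = len(references)
    valid = sum(1 for p in predictions if p in allowed)
    correct = sum(1 for p, r in zip(predictions, references) if p == r)
    return valid / total, correct / total


# Toy example: one invalid output ("banana") and one wrong category ("3" vs "2").
validity, accuracy = score_predictions(
    ["1", "3", "banana", "5"],
    ["1", "2", "4", "5"],
    ALLOWED_LABELS,
)
print(validity, accuracy)  # 0.75 0.5
```

Under this definition accuracy can never exceed validity, which matches the direction of the reported figures.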

GPT-5.2 achieved 99.8% validity and 81.1% accuracy across 1,600 predictions. By comparison, the pooled SLMs reached 96.8% validity and 61.1% accuracy across 65,600 predictions (41 models × 1,600 reports). The top-performing SLMs, in the 20-32 billion parameter range, approached 99% validity with accuracy in the mid-to-high 70% range.

Performance improved with model size, with a notable inflection between models with fewer than 1 billion parameters and those with 10 billion or more. Framework complexity also had a significant effect: on more complex RADS frameworks, models tended to assign the wrong category rather than produce invalid outputs.

Guided prompting improved both validity and accuracy; validity reached 99.2% with guided prompts, compared with 96.7% with zero-shot prompting.

📰 Original Source: https://arxiv.org/abs/2601.03232v1

All rights and credit belong to the original publisher.