PixelGen: Pixel Diffusion Beats Latent Diffusion with Perceptual Loss

Image generated by Gemini AI

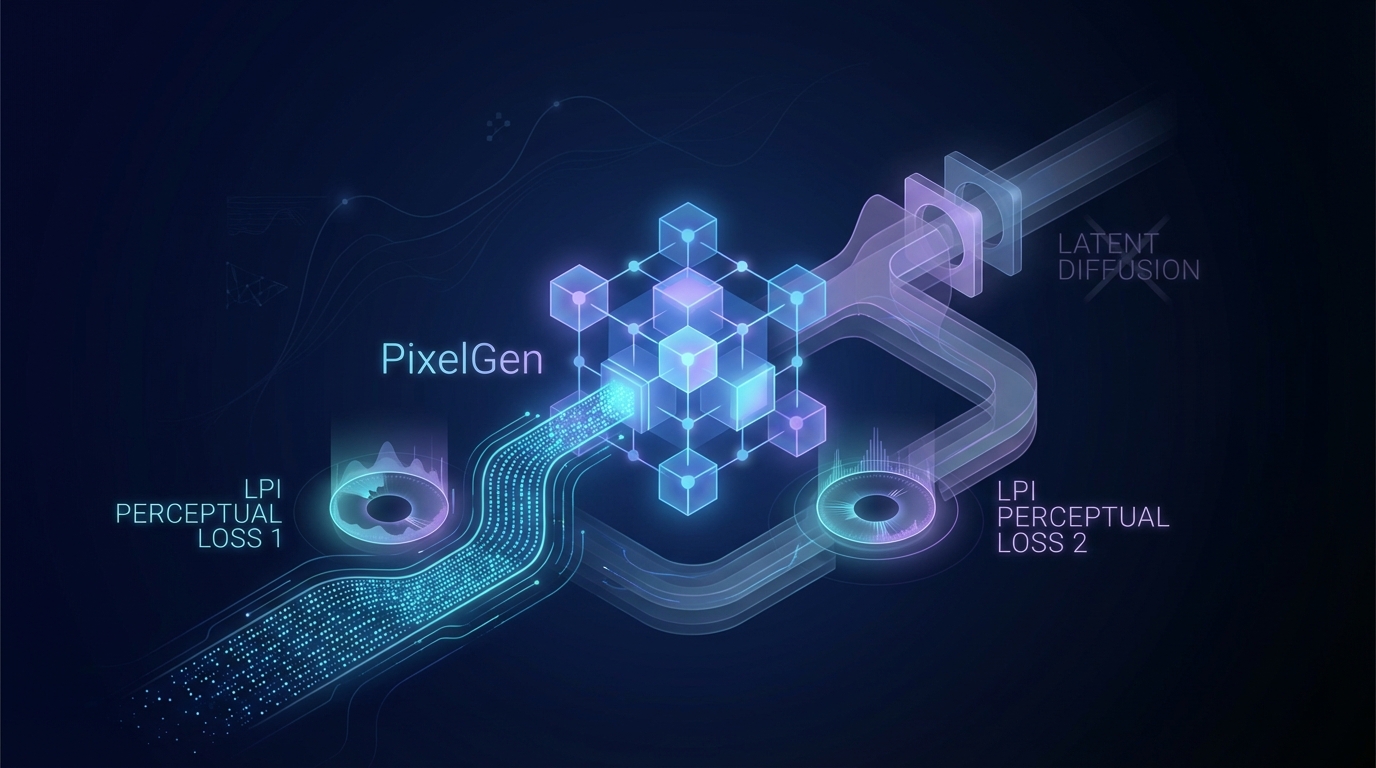

PixelGen is a pixel diffusion framework that sidesteps the limitations of two-stage latent diffusion models by training and generating directly in pixel space. It adds two perceptual losses to improve image quality: an LPIPS loss for local patterns and a DINO-based loss for global semantics. PixelGen reaches an FID of 5.11 on ImageNet-256 after only 80 training epochs, without classifier-free guidance, and scales well to large-scale text-to-image generation, with a GenEval score of 0.79. The approach needs no VAE and no auxiliary stages, which simplifies the generative pipeline. The code is publicly available on GitHub.

PixelGen Outperforms Latent Diffusion with Innovative Perceptual Loss

PixelGen, a pixel diffusion framework, is reported to outperform latent diffusion baselines by adding perceptual supervision to training. Because it generates images directly in pixel space, it avoids the artifacts and bottlenecks that the VAE stage introduces in two-stage latent diffusion.
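As a rough illustration of what perceptual supervision can look like, the toy sketch below adds a local and a global feature-matching term to a plain pixel loss. It is not the authors' implementation: the feature extractors are hypothetical stand-ins for LPIPS and DINO networks, the loss weights `w_local` and `w_global` are assumed, and it assumes the model outputs an estimate of the clean image.

```python
# Toy sketch: a pixel-space loss plus two perceptual terms.
# The feature extractors are hypothetical stand-ins, NOT real LPIPS or DINO networks.

def mse(a, b):
    """Mean squared error between two equal-length lists of floats."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def local_features(img):
    """Stand-in for an LPIPS-style network: differences between neighbouring pixels."""
    return [img[i + 1] - img[i] for i in range(len(img) - 1)]

def global_features(img):
    """Stand-in for a DINO-style encoder: whole-image mean and variance."""
    mean = sum(img) / len(img)
    var = sum((x - mean) ** 2 for x in img) / len(img)
    return [mean, var]

def perceptual_training_loss(pred, target, w_local=1.0, w_global=1.0):
    """Pixel loss plus weighted local and global perceptual terms (weights are assumed)."""
    pixel = mse(pred, target)
    local = mse(local_features(pred), local_features(target))
    global_term = mse(global_features(pred), global_features(target))
    return pixel + w_local * local + w_global * global_term

target = [0.0, 0.5, 1.0, 0.5]  # a flattened toy "image"
pred = [0.1, 0.4, 0.9, 0.6]    # a model's estimate of the clean image
loss = perceptual_training_loss(pred, target)
```

Setting both weights to zero recovers the plain pixel loss, which makes it easy to see how much each perceptual term contributes on a given example.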

Key Performance Metrics

PixelGen achieves a Fréchet Inception Distance (FID) of 5.11 on ImageNet-256 without classifier-free guidance and with only 80 training epochs. The authors report this as an improvement over existing latent diffusion baselines.
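For readers unfamiliar with the metric, FID is conventionally defined as the Fréchet distance between two Gaussians fitted to Inception-network features of real and generated images. The symbols below follow the standard definition and are not taken from the PixelGen paper: $\mu_r, \Sigma_r$ are the feature mean and covariance for real images, and $\mu_g, \Sigma_g$ those for generated images. Lower values indicate that the generated distribution is closer to the real one.

```latex
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
```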

PixelGen also scales to large-scale text-to-image generation, where it achieves a GenEval score of 0.79. The framework requires neither a variational autoencoder (VAE) nor latent representations, which streamlines the generative process.

Availability

The code is publicly available in the project's GitHub repository.

📰 Original Source: https://arxiv.org/abs/2602.02493v1

All rights and credit belong to the original publisher.