UniX: Unifying Autoregression and Diffusion for Chest X-Ray Understanding and Generation

Image generated by Gemini AI

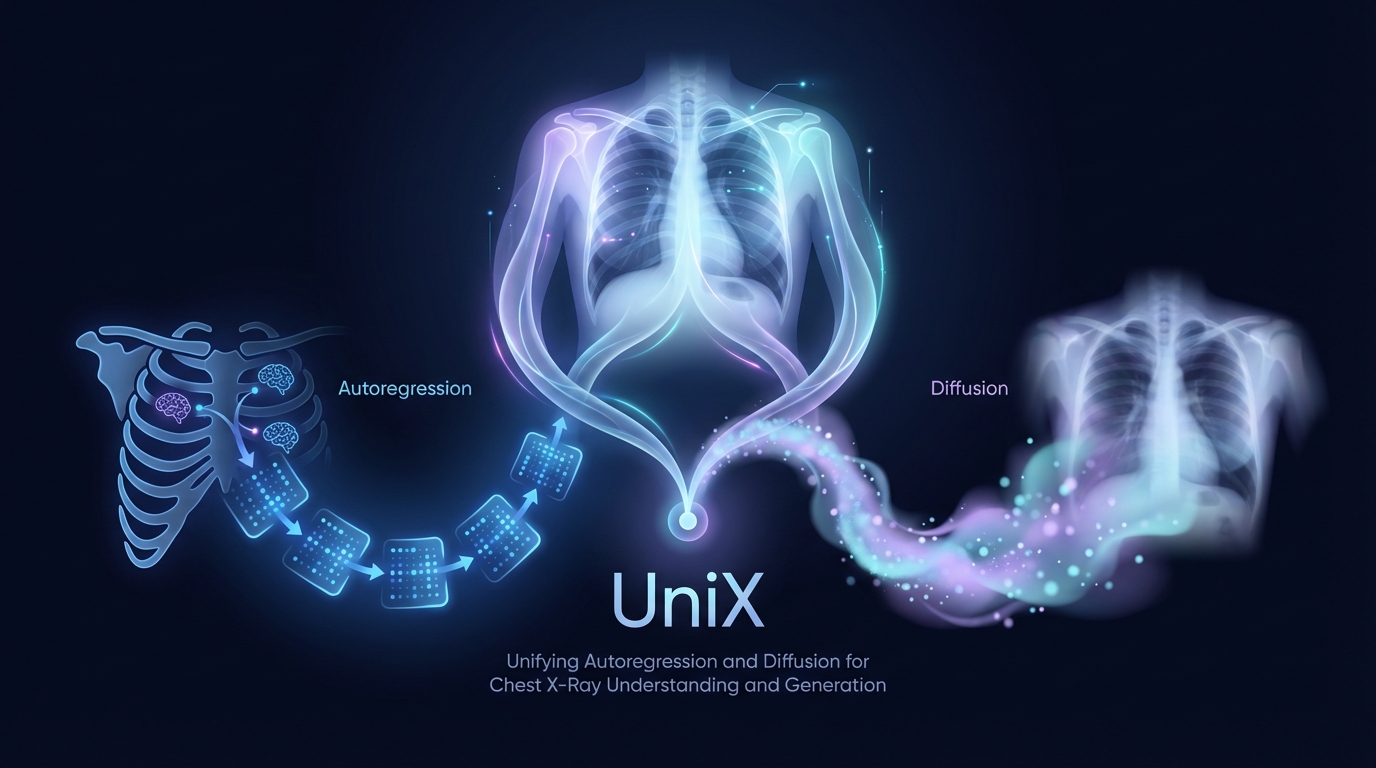

Researchers have introduced UniX, a unified medical foundation model for chest X-ray understanding and generation that assigns the two tasks to separate branches: an autoregressive branch for understanding and a diffusion branch for generation. The design, which includes a cross-modal self-attention mechanism, is reported to yield a 46.1% improvement in understanding performance and a 24.2% gain in generation quality on benchmark tests. UniX uses a quarter of the parameters of the LLM-CXR model and performs on par with task-specific models. The model and code are available on GitHub.

UniX Model Revolutionizes Chest X-Ray Understanding and Generation

UniX is a new model for both understanding and generating chest X-rays. It decouples visual understanding from pixel-level reconstruction, and its authors report significant gains on both tasks.

Existing models often rely on parameter-shared autoregressive architectures, which struggle to balance semantic abstraction against detailed pixel reconstruction. UniX addresses this with a dual-branch architecture: an autoregressive branch dedicated to understanding and a diffusion branch dedicated to high-fidelity generation.

Key Features and Innovations

UniX introduces a cross-modal self-attention mechanism that lets the generation branch incorporate features from the understanding branch. A rigorous data-cleaning pipeline and a multi-stage training strategy help the two branches work together effectively.
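The article does not describe how the cross-modal self-attention is implemented. As a rough illustration of the general idea only, the sketch below assumes that each generation token attends over a joint sequence made of understanding tokens followed by generation tokens. The function name `cross_modal_self_attention`, the identity query/key/value projections, and the toy vectors are all hypothetical simplifications, not details taken from UniX.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def cross_modal_self_attention(und_tokens, gen_tokens):
    """Hypothetical sketch: generation tokens attend over the joint
    sequence [understanding tokens; generation tokens].

    Assumption: queries, keys, and values use identity projections.
    A real model would apply learned projection matrices here.
    """
    joint = und_tokens + gen_tokens          # keys/values from both modalities
    dim = len(gen_tokens[0])
    scale = 1.0 / math.sqrt(dim)             # standard scaled dot-product factor

    outputs, all_weights = [], []
    for query in gen_tokens:                 # only the generation side is updated
        weights = softmax([dot(query, key) * scale for key in joint])
        mixed = [
            sum(w * value[d] for w, value in zip(weights, joint))
            for d in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Toy example: two understanding tokens, one generation token.
und = [[1.0, 0.0], [0.0, 1.0]]
gen = [[0.5, 0.5]]
out, weights = cross_modal_self_attention(und, gen)
print(weights[0])  # attention over [und_0, und_1, gen_0]
print(out[0])      # generation token after mixing in understanding features
```

In this sketch the understanding tokens act only as keys and values, so information flows from the understanding side into the generation side and not the other way. Whether UniX follows that direction of flow is an assumption here.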

On benchmark tests, UniX recorded a 46.1% improvement in understanding performance and a 24.2% gain in generation quality while using a quarter of the parameters of the LLM-CXR model.

Impact and Availability

By matching the performance of task-specific models, UniX offers a new approach to combined medical image understanding and generation. Developers and researchers can access the model and its associated code on GitHub.

📰 Original Source: https://arxiv.org/abs/2601.11522v1

All rights and credit belong to the original publisher.