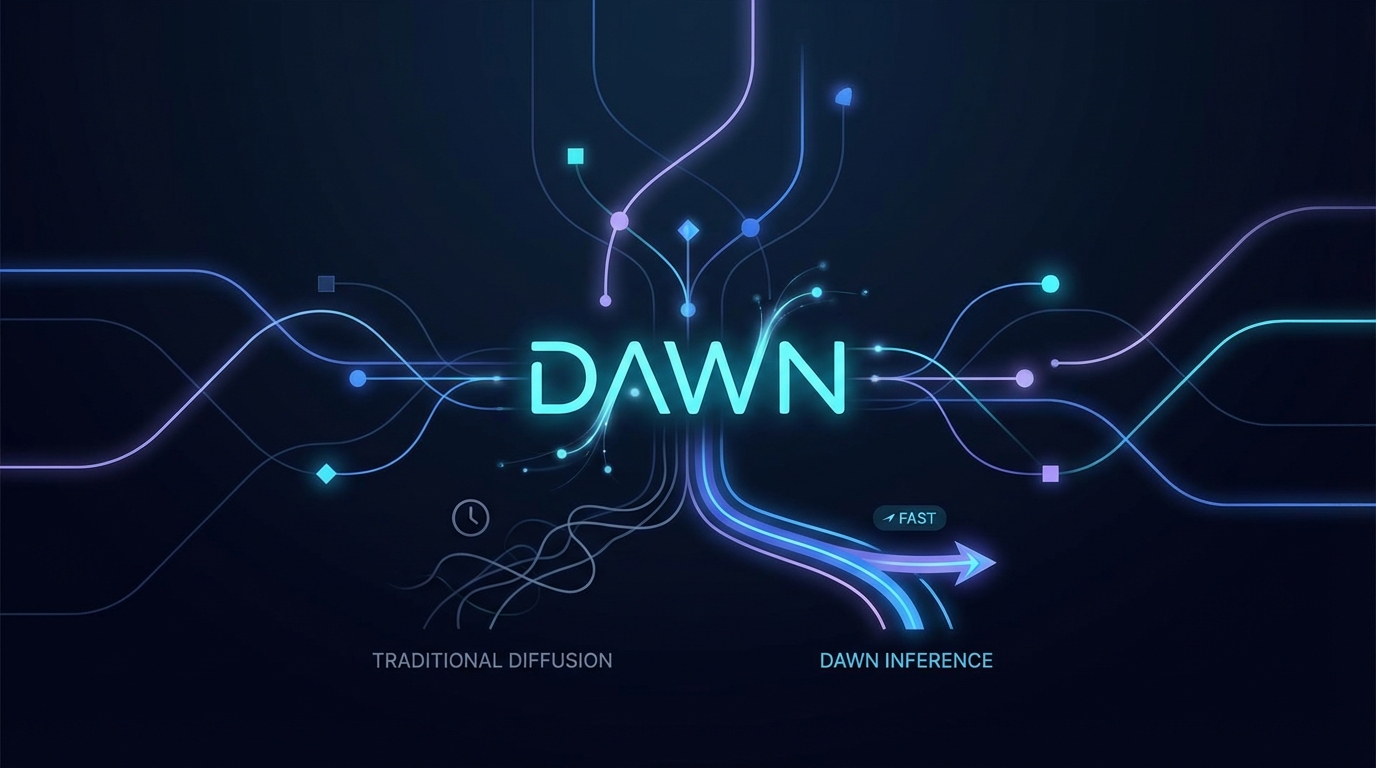

DAWN: Dependency-Aware Fast Inference for Diffusion LLMs

The article introduces DAWN, a method for speeding up inference in diffusion large language models (dLLMs) without sacrificing output quality. DAWN addresses the inefficiency of conventional parallel decoding by modeling inter-token dependencies, which makes token unmasking more reliable. In the reported experiments, DAWN speeds up inference by 1.80-8.06x over existing methods while maintaining generation quality. The code is available on GitHub.
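To make the idea of dependency-aware unmasking concrete, here is a toy selection rule in Python. It is only an illustrative sketch and not DAWN's actual algorithm, which this summary does not specify. The function name `select_unmask_positions`, the `dependency` matrix (assumed here to be some pairwise coupling score between positions), and both thresholds are hypothetical.

```python
from typing import List, Sequence


def select_unmask_positions(
    confidence: Sequence[float],
    dependency: Sequence[Sequence[float]],
    masked: Sequence[int],
    conf_threshold: float = 0.9,  # hypothetical value
    dep_threshold: float = 0.5,   # hypothetical value
) -> List[int]:
    """Greedily pick masked positions to unmask in one parallel step.

    A position is accepted when its confidence clears conf_threshold and it
    is not strongly coupled (score above dep_threshold, in either direction)
    to a position already accepted in this step.
    """
    # Visit confident candidates from most to least confident.
    candidates = sorted(
        (i for i in masked if confidence[i] >= conf_threshold),
        key=lambda i: confidence[i],
        reverse=True,
    )
    accepted: List[int] = []
    for i in candidates:
        coupled = any(
            max(dependency[i][j], dependency[j][i]) > dep_threshold
            for j in accepted
        )
        if not coupled:
            accepted.append(i)
    # Guarantee progress: fall back to the single most confident position.
    if not accepted and masked:
        accepted.append(max(masked, key=lambda i: confidence[i]))
    return sorted(accepted)


# Positions 0 and 1 are both confident but strongly coupled, so only the
# more confident of the two is unmasked in this step.
confidence = [0.95, 0.93, 0.97, 0.40]
dependency = [
    [0.0, 0.8, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
step = select_unmask_positions(confidence, dependency, masked=[0, 1, 2, 3])
print(step)
```

In this toy setup a purely confidence-based rule would unmask positions 0, 1, and 2 at once, whereas the dependency check defers position 1 to a later step. How DAWN actually estimates and uses dependencies should be taken from the paper and its released code.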