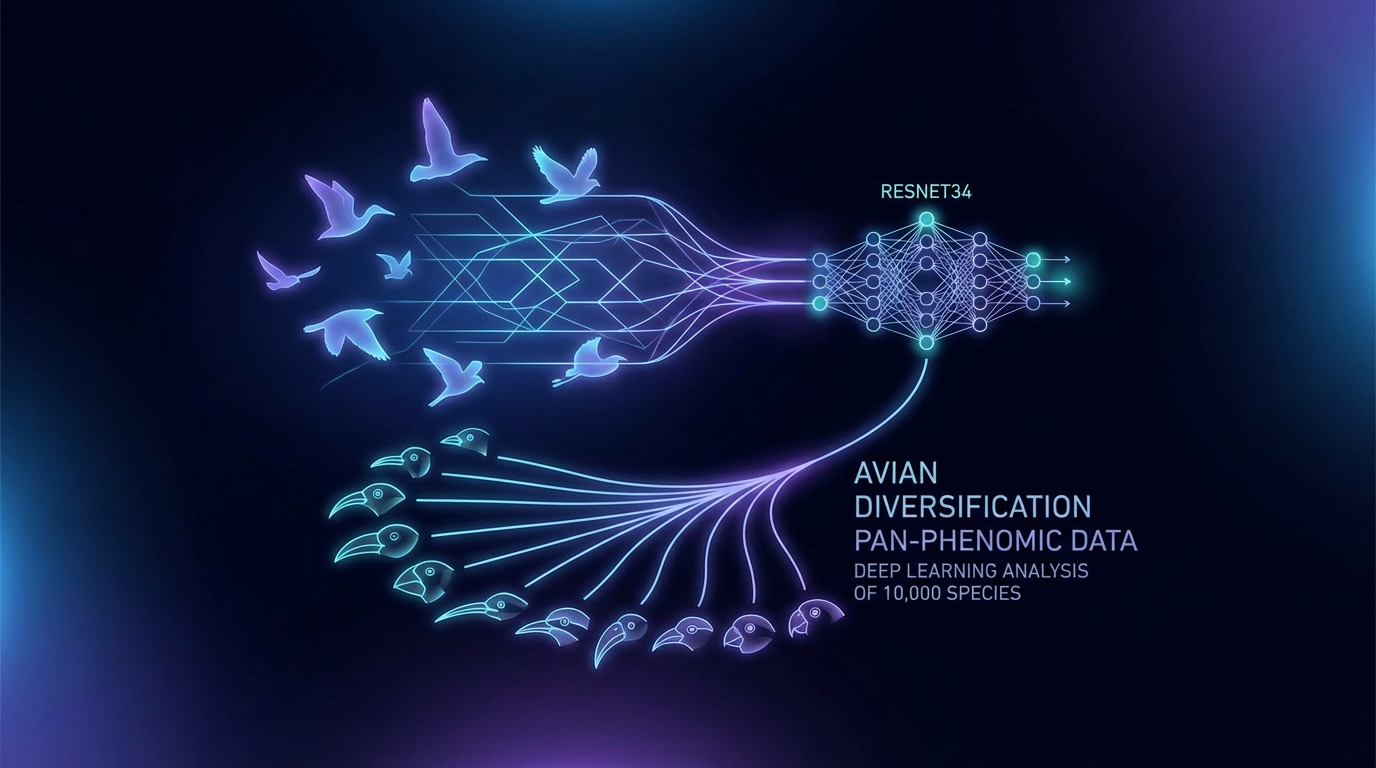

Deep-learning-based pan-phenomic data reveals the explosive evolution of avian visual disparity

A recent study uses deep learning, specifically a ResNet34 model trained to recognize over 10,000 bird species, to analyze avian morphological evolution. The model's high-dimensional embedding space captures phenotypic convergence, and the morphological disparity measured in that space is linked to species richness, which points to richness as a key factor in morphospace expansion. Patterns following the K-Pg extinction show an "early burst" in diversity. Notably, the model also forms hierarchical structure even though it was trained on flat labels, which challenges the assumption that CNNs rely mainly on local textures.
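
To make the idea of disparity in an embedding space concrete, here is a minimal sketch of how per-group disparity could be compared with species richness. It is an illustration under stated assumptions, not the study's method: the summary does not say which disparity metric was used, so the sketch substitutes sum of variances, one common choice in morphometrics. The function names `disparity` and `disparity_by_group`, the group labels, and the random vectors standing in for ResNet34 embeddings are all hypothetical.

```python
import numpy as np


def disparity(embeddings: np.ndarray) -> float:
    """Sum of per-dimension sample variances across species.

    Assumption: sum of variances is used here as a stand-in; the study's
    actual disparity metric is not given in the summary.
    """
    if embeddings.shape[0] < 2:
        return 0.0  # a single species has no spread
    return float(np.var(embeddings, axis=0, ddof=1).sum())


def disparity_by_group(embeddings: np.ndarray, groups) -> dict:
    """Map each group label to (species richness, disparity)."""
    groups = np.asarray(groups)
    result = {}
    for label in np.unique(groups):
        members = embeddings[groups == label]
        result[str(label)] = (int(members.shape[0]), disparity(members))
    return result


# Hypothetical data: 6 species, 4-dimensional embeddings, two groups.
rng = np.random.default_rng(0)
embeddings = rng.normal(size=(6, 4))
groups = ["A", "A", "A", "A", "B", "B"]

for label, (richness, disp) in disparity_by_group(embeddings, groups).items():
    print(f"group {label}: richness={richness}, disparity={disp:.3f}")
```

With real data, each row would be one species' embedding from the trained network, and the groups would be clades or time bins. Plotting richness against disparity across groups is then one simple way to examine the relationship the study reports.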